AGI Alignment Experiments: Foundation vs INSTRUCT, various Agent

By a mysterious writer

Description

A companion video, a GitHub repo with data and code, and a writeup accompany this experiment.

Recursive Self Referential Reasoning

This experiment is meant to demonstrate the concept of “recursive, self-referential reasoning,” whereby a Large Language Model (LLM) is given an “agent model” (an identity defined in natural language) and its thought process is evaluated in a long-term simulation environment. Here is an example of an agent model. This one tests the Core Objective Function
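As a rough illustration of the loop described above (not the author's code, and not the agent model the text refers to), the sketch below feeds an agent its identity plus its own earlier thoughts at every step. Everything in it is an assumption made for illustration: the `AGENT_MODEL` text, the `stub_llm` stand-in for a real model call, and the `run_simulation` helper are all hypothetical names.

```python
from typing import Callable, List

# Hypothetical agent model: an identity written in natural language.
# Placeholder text only; it is NOT the agent model used in the experiment.
AGENT_MODEL = (
    "I am an agent. I reflect on my own previous thoughts "
    "before deciding what to think next."
)


def stub_llm(prompt: str) -> str:
    """Stand-in for a real LLM call; returns a deterministic string."""
    return f"thought about {len(prompt)} characters of context"


def run_simulation(
    agent_model: str, llm: Callable[[str], str], steps: int
) -> List[str]:
    """Run a fixed number of steps, feeding the agent its own prior thoughts."""
    thoughts: List[str] = []
    for _ in range(steps):
        # Self-reference: the prompt holds the identity plus every earlier thought.
        prompt = agent_model + "\n" + "\n".join(thoughts)
        thoughts.append(llm(prompt))
    return thoughts


if __name__ == "__main__":
    for i, thought in enumerate(run_simulation(AGENT_MODEL, stub_llm, steps=3), 1):
        print(i, thought)
```

Swapping `stub_llm` for a call to an actual model would be the obvious next step. The resulting list of thoughts is what an evaluation over a long-running simulation would inspect.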

This AI newsletter is all you need #69

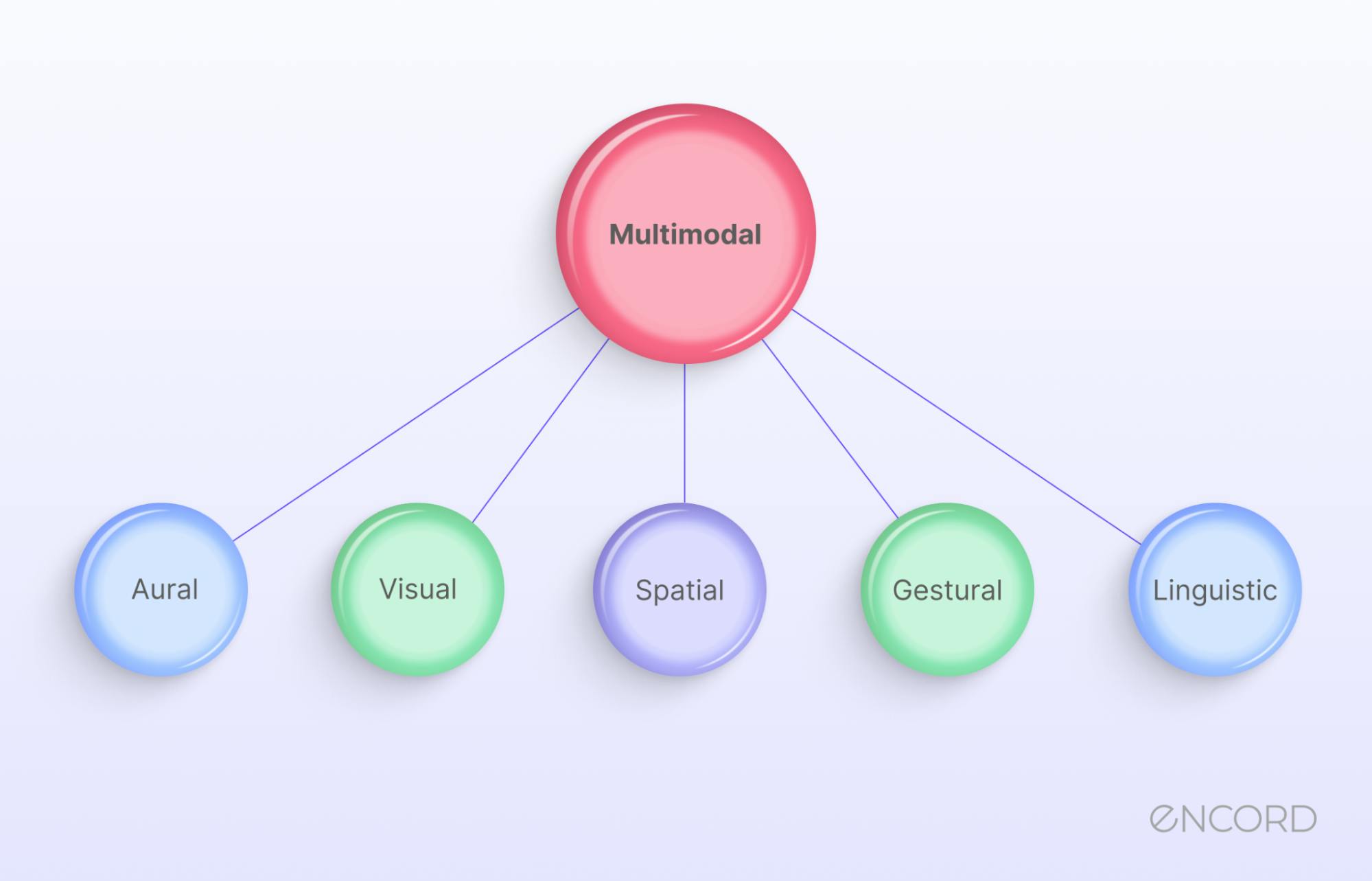

Multimodal Annotation Tools Top Tools

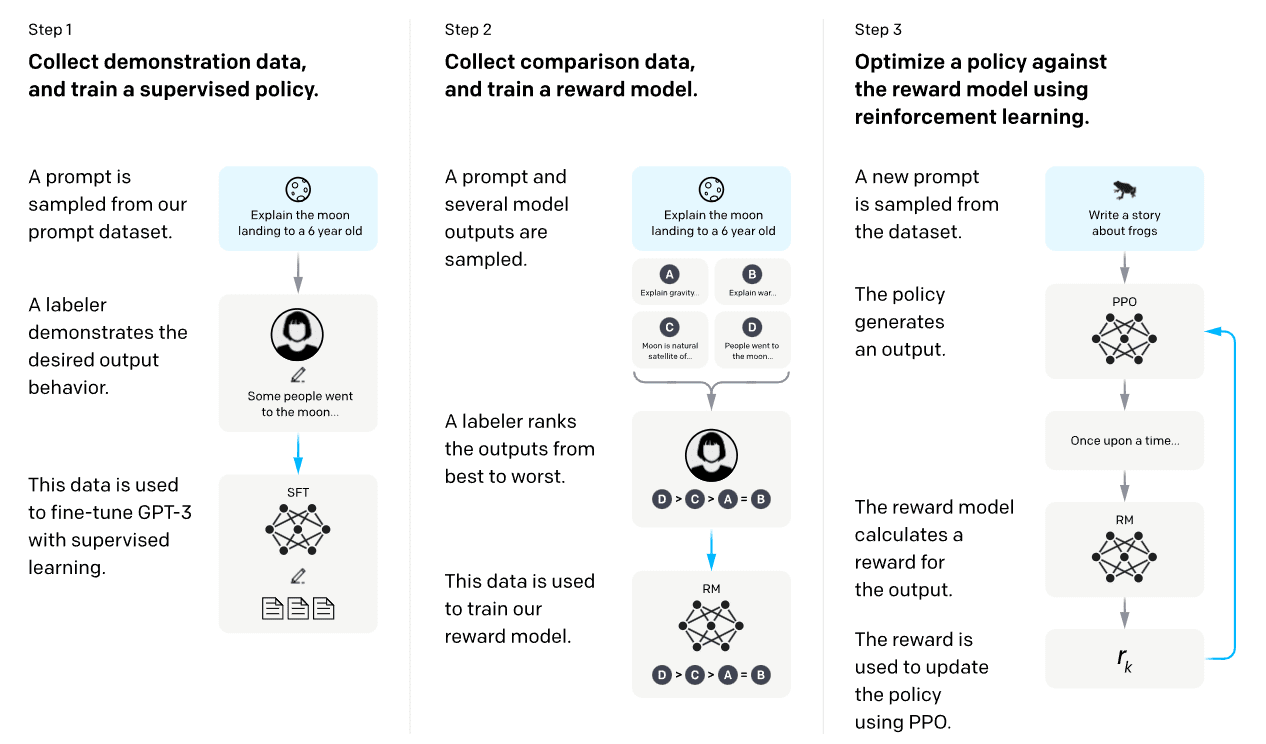

AI Safety 101 : Reward Misspecification — LessWrong

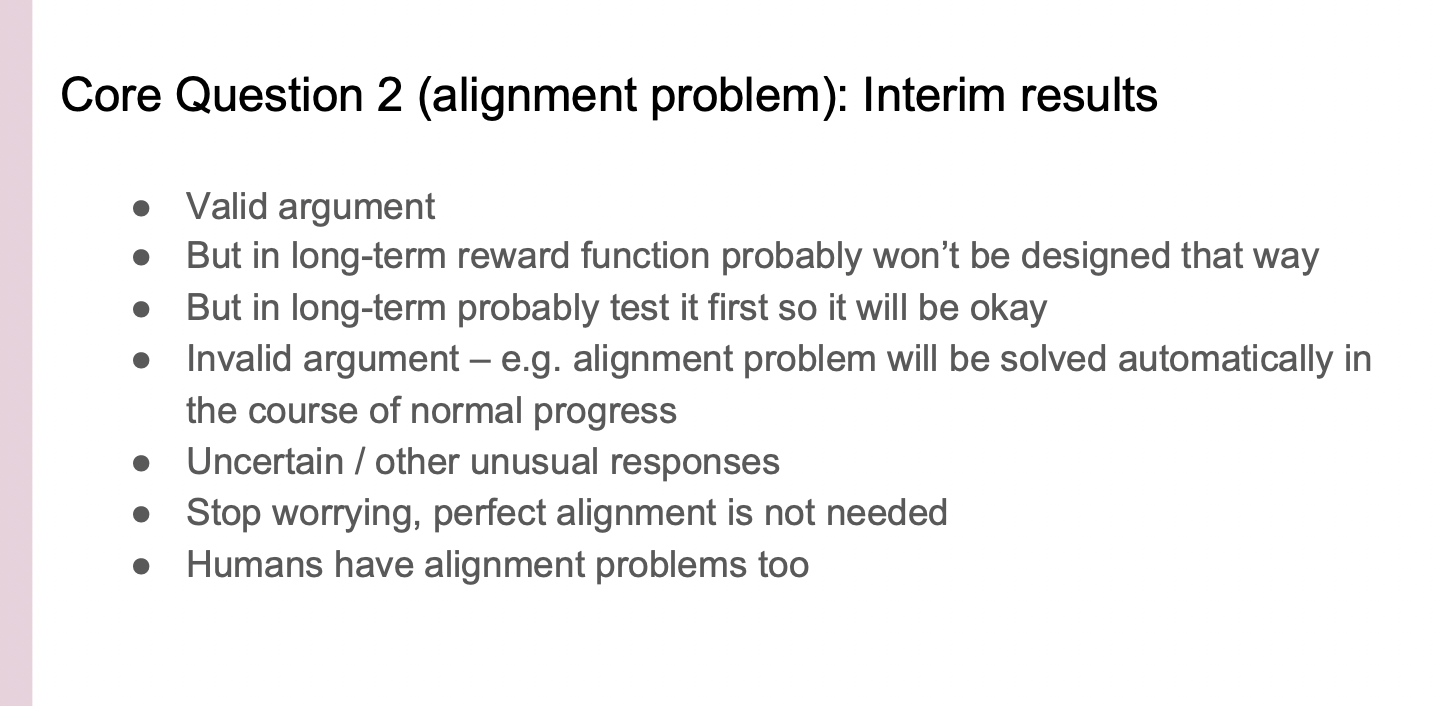

Vael Gates: Risks from Advanced AI (June 2022) — EA Forum

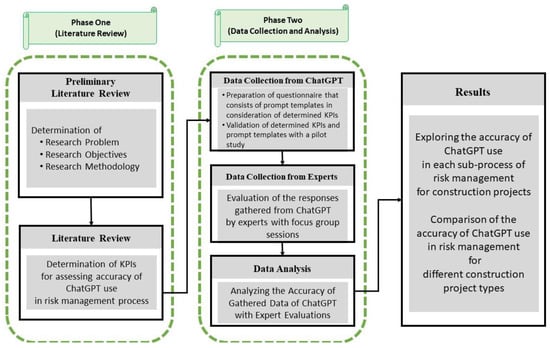

Sustainability, Free Full-Text

What Is General Artificial Intelligence (AI)? Definition, Challenges, and Trends - Spiceworks

Exploring AGI: The Future of Intelligent Machines

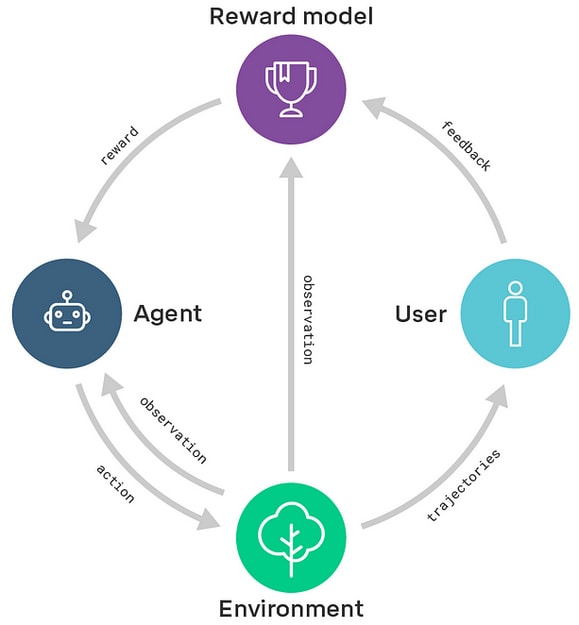

Operando Characterization of Organic Mixed Ionic/Electronic Conducting Materials

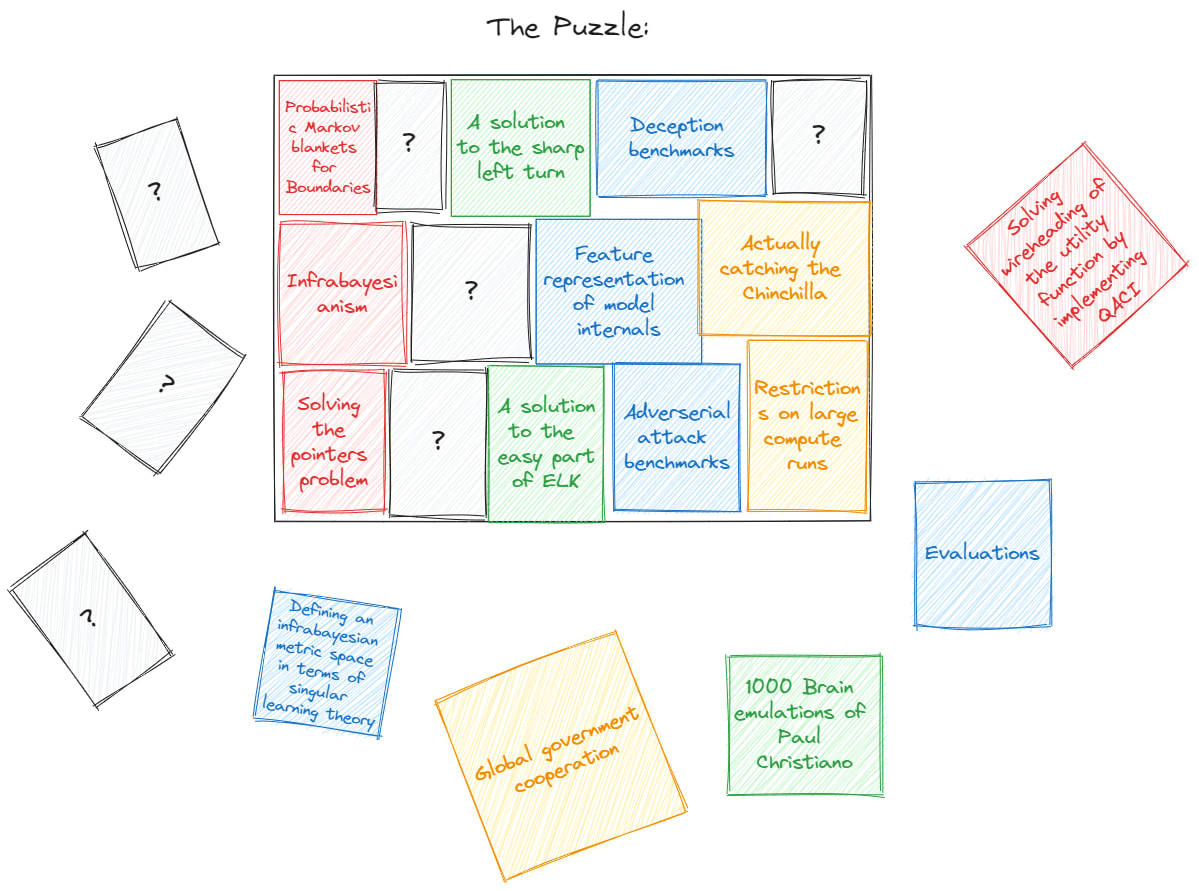

How well does your research adress the theory-practice gap? — LessWrong

When brain-inspired AI meets AGI - ScienceDirect

Examining User-Friendly and Open-Sourced Large GPT Models: A Survey on Language, Multimodal, and Scientific GPT Models – arXiv Vanity

The Translucent Thoughts Hypotheses and Their Implications — AI Alignment Forum

Simulators :: — Moire

GitHub - uncbiag/Awesome-Foundation-Models: A curated list of foundation models for vision and language tasks
