No Virtualization Tax for MLPerf Inference v3.0 Using NVIDIA Hopper and Ampere vGPUs and NVIDIA AI Software with vSphere 8.0.1 - VROOM! Performance Blog

Description

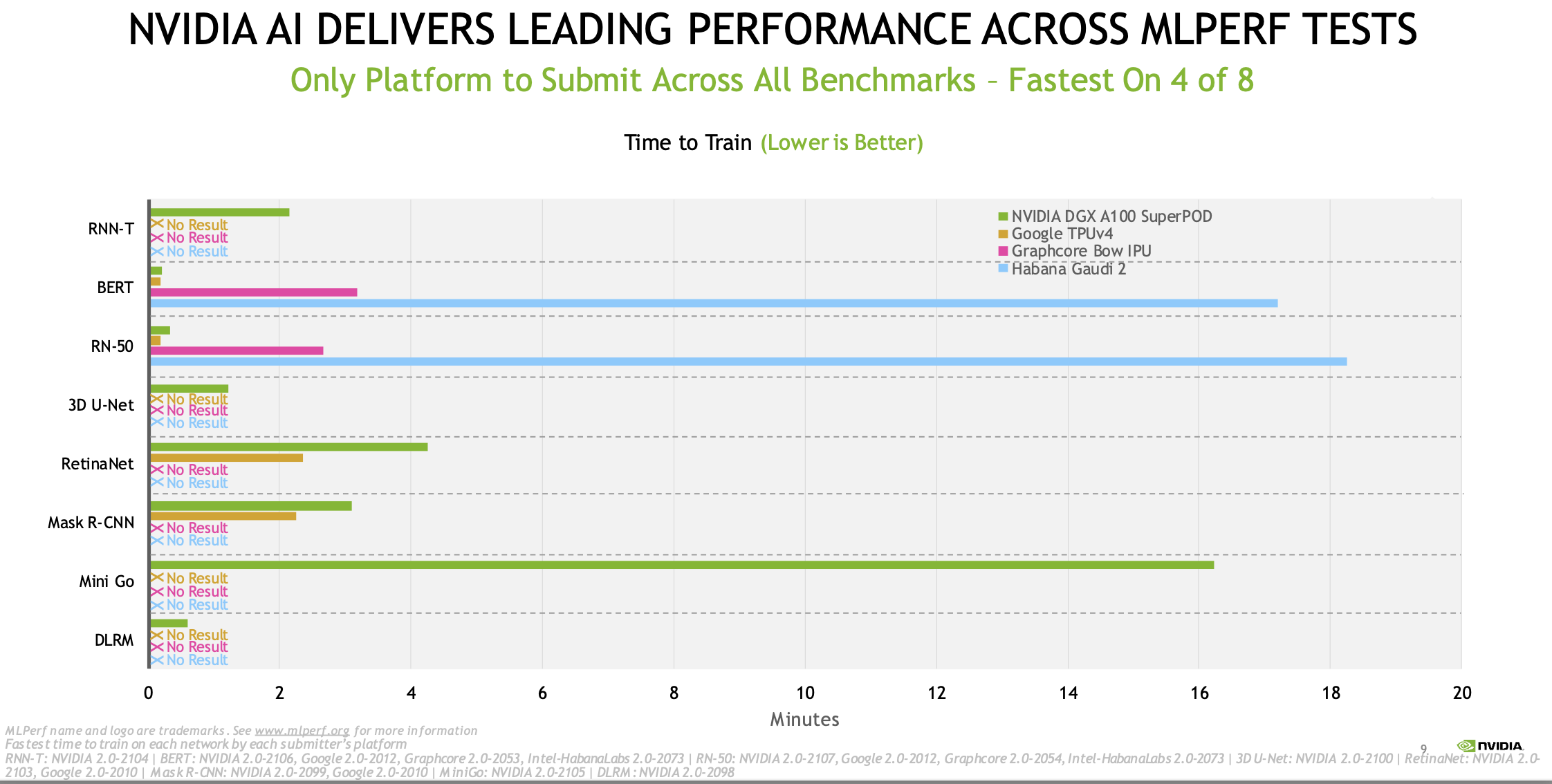

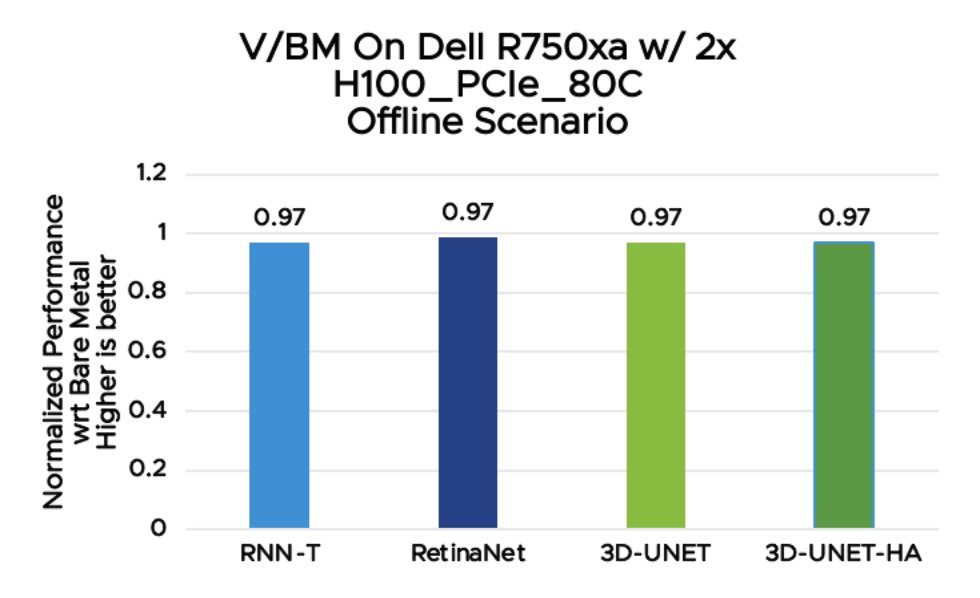

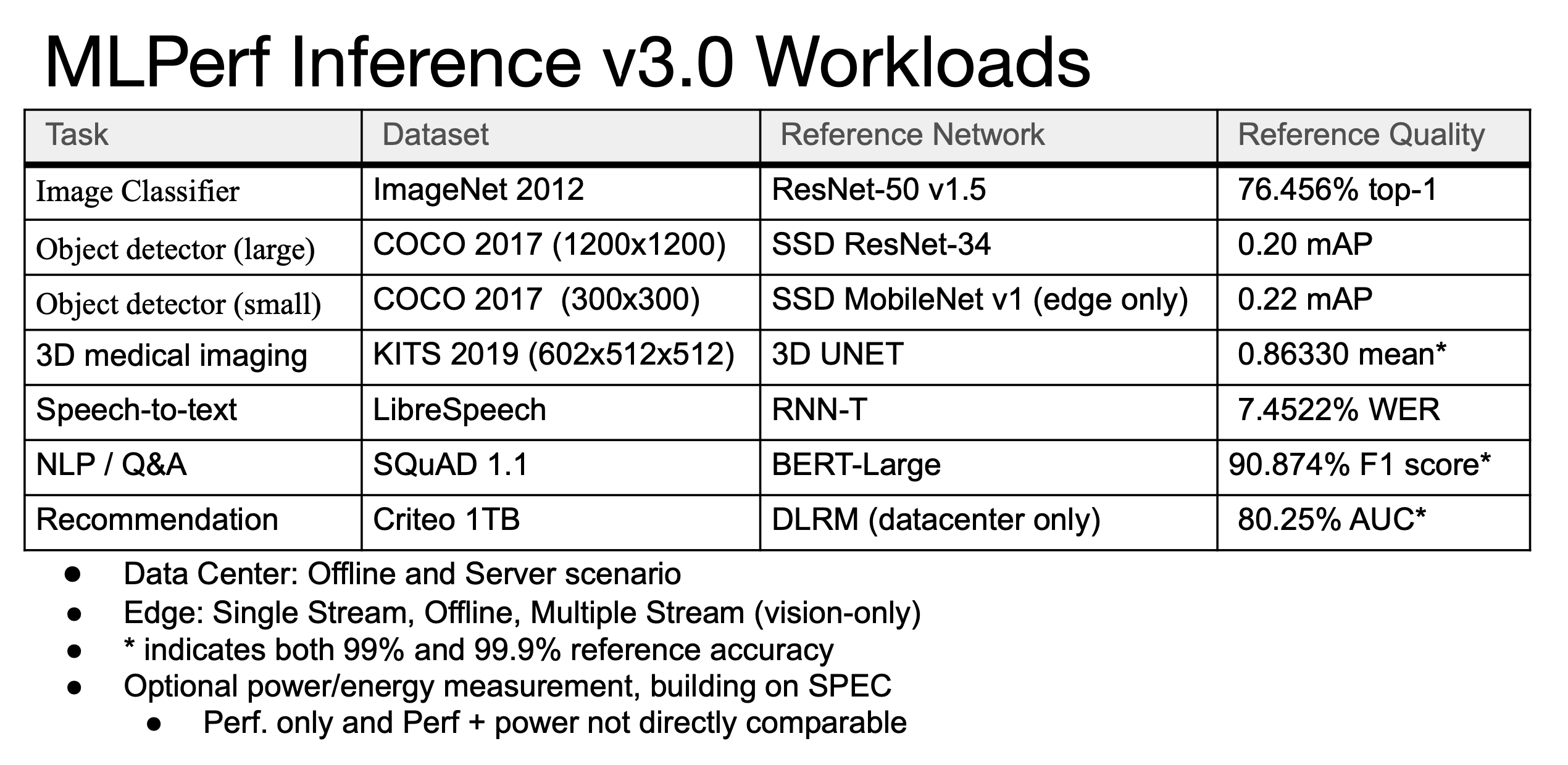

In this blog post, we present MLPerf Inference v3.0 test results for the VMware vSphere virtualization platform with NVIDIA H100- and A100-based vGPUs. Our tests show that workloads running on NVIDIA vGPUs in vSphere perform the same as or better than they do on a bare-metal system.

vSphere Archives - VROOM! Performance Blog

The Mainstreaming of MLPerf? Nvidia Dominates Training v2.0 but Challengers Are Rising

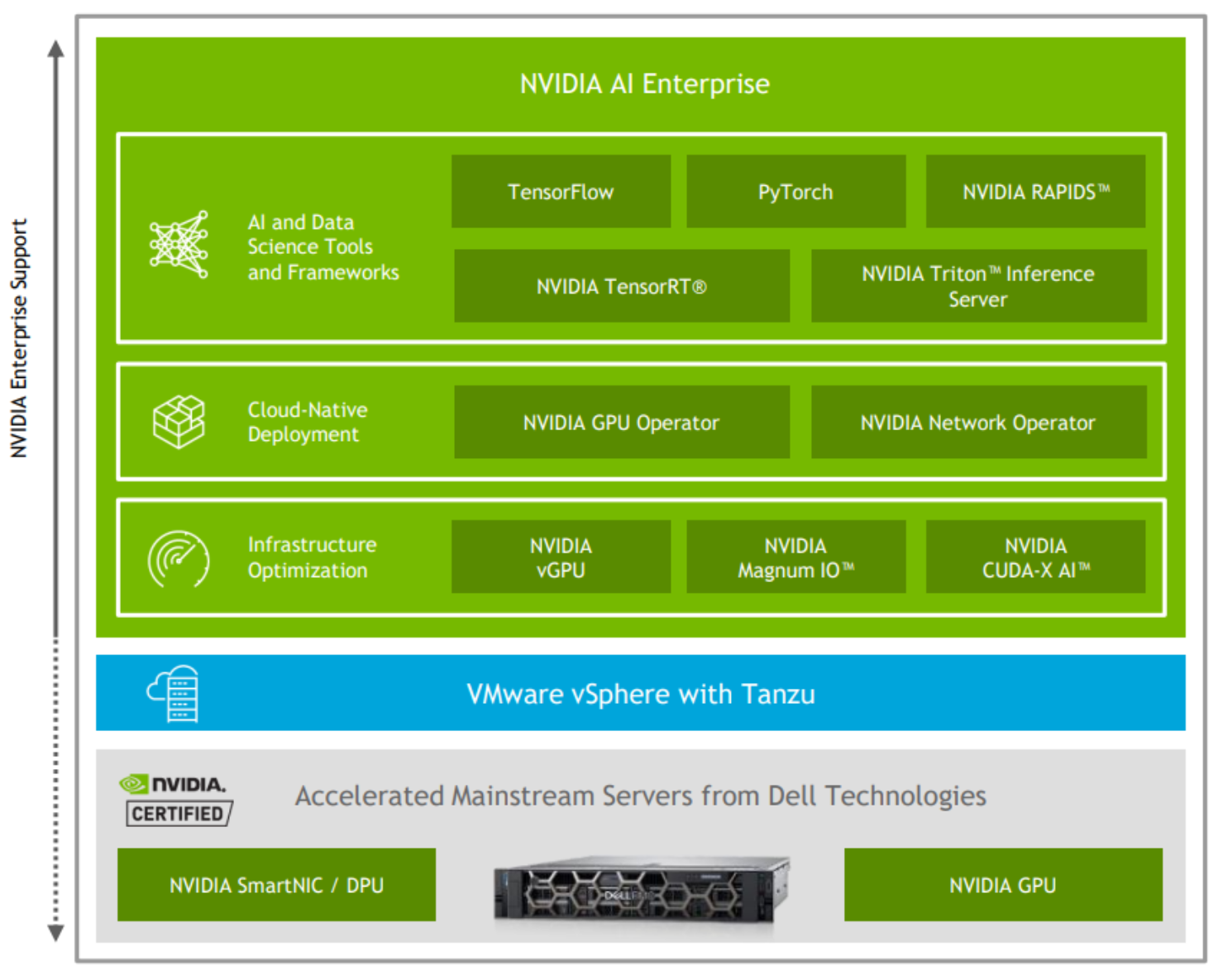

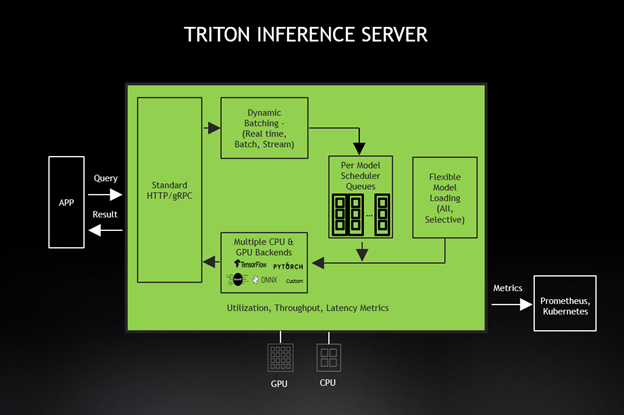

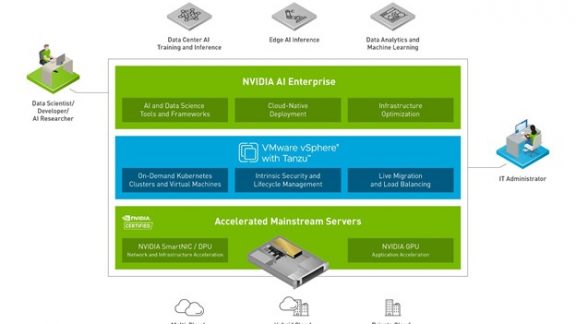

NVIDIA, White Paper - Virtualizing GPUs for AI with VMware and NVIDIA Based on Dell Infrastructure

VMware Performance (@vmwarevroom) / X

Leading VMware Performance Results for Machine Learning Apps Using NVIDIA AI Enterprise on vSphere

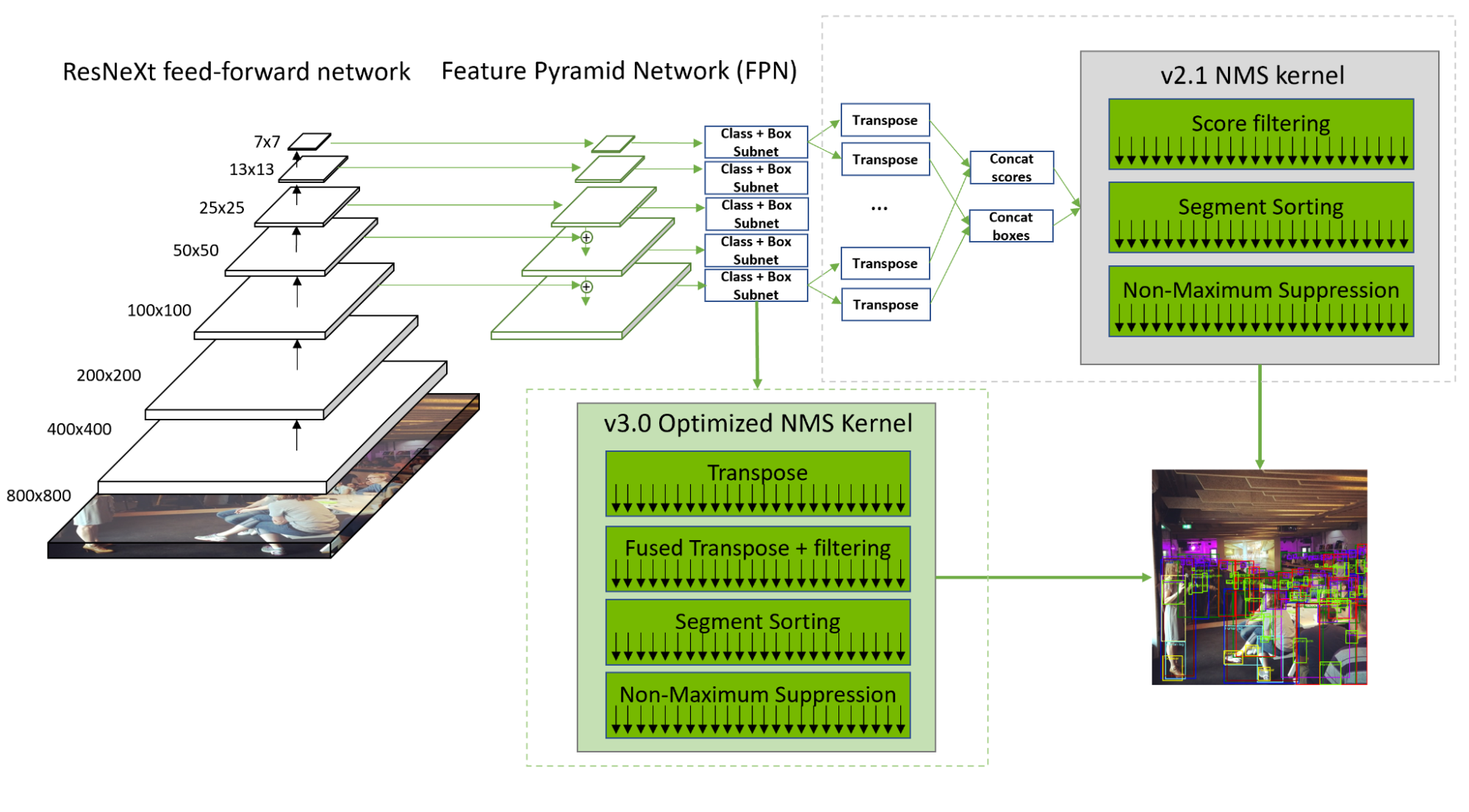

Setting New Records in MLPerf Inference v3.0 with Full-Stack Optimizations for AI

VROOM! Performance Blog – Page 2 – from VMware's performance engineering team

Release Notes - NVIDIA Docs

MLPerf Inference 3.0 Highlights - Nvidia, Intel, Qualcomm and…ChatGPT

Winning MLPerf Inference 0.7 with a Full-Stack Approach
